Performance Testing

What is performance testing?

- It is a type of non-functional testing.

- Performance testing is performed to determine how fast some aspect of a system performs under a particular workload. It also measures quality attributes of the system, such as scalability, reliability and resource usage.

- The goal of performance testing is not to find bugs but to eliminate performance bottlenecks.

- It can serve different purposes. For example, it can demonstrate that the system meets performance criteria.

- It can compare two systems to find which performs better, or it can measure which part of the system or workload causes the system to perform badly.

- This process can involve quantitative tests done in a lab, such as measuring the response time or the number of MIPS (millions of instructions per second) at which a system functions.
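As a minimal sketch of measuring response time, the following Python times repeated calls to an operation with `time.perf_counter` and summarizes the samples. The `handle_request` function is a hypothetical stand-in for the operation under test; a real test would call the actual system instead.

```python
import statistics
import time


def handle_request() -> int:
    """Hypothetical stand-in for the operation under test (pure CPU work)."""
    return sum(i * i for i in range(10_000))


def measure_response_times(operation, iterations: int = 200) -> dict:
    """Call `operation` repeatedly and summarize its response times in milliseconds."""
    samples_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        operation()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=100) returns the 1st..99th percentile cut points; index 94 is p95.
    p95 = statistics.quantiles(samples_ms, n=100)[94]
    return {
        "count": len(samples_ms),
        "mean_ms": statistics.mean(samples_ms),
        "p95_ms": p95,
        "max_ms": max(samples_ms),
    }


if __name__ == "__main__":
    print(measure_response_times(handle_request))
```

Reporting a high percentile alongside the mean is a common choice, because a few slow responses can hurt users even when the average looks fine.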

Why do performance testing?

Performance testing is done to provide stakeholders with information about their application regarding speed, stability and scalability. More importantly, performance testing uncovers what needs to be improved before the product goes to market. Without performance testing, software is likely to suffer from issues such as running slowly when several users use it simultaneously, inconsistencies across different operating systems and poor usability. Performance testing determines whether the software meets speed, scalability and stability requirements under expected workloads. Applications sent to market with poor performance metrics due to nonexistent or poor performance testing are likely to gain a bad reputation and fail to meet expected sales goals. Also, mission-critical applications such as space launch programs or life-saving medical equipment should be performance tested to ensure that they run for a long period of time without deviations.

Types of performance testing

- Load testing - checks the application’s ability to perform under anticipated user loads. The objective is to identify performance bottlenecks before the software application goes live.

- Stress testing - involves testing an application under extreme workloads to see how it handles high traffic or data processing. The objective is to identify the breaking point of an application.

- Endurance testing - is done to make sure the software can handle the expected load over a long period of time.

- Spike testing - tests the software’s reaction to sudden large spikes in the load generated by users.

- Volume testing - In volume testing, a large amount of data is populated in the database and the overall software system’s behavior is monitored. The objective is to check the software application’s performance under varying database volumes.

- Scalability testing - The objective of scalability testing is to determine the software application’s effectiveness in “scaling up” to support an increase in user load. It helps plan capacity additions to your software system.
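The difference between load, stress and scalability testing is mostly the load profile. A minimal Python sketch, assuming a hypothetical `simulated_request` that sleeps for about 10 ms in place of a real call, runs a number of concurrent virtual users with a thread pool and reports throughput and average latency at each user count:

```python
import time
from concurrent.futures import ThreadPoolExecutor


def simulated_request() -> float:
    """Hypothetical request: sleeps ~10 ms to mimic server latency, returns its latency in s."""
    start = time.perf_counter()
    time.sleep(0.01)
    return time.perf_counter() - start


def run_load(users: int, requests_per_user: int) -> dict:
    """Run `users` concurrent workers, each issuing `requests_per_user` sequential requests."""

    def user_session() -> list:
        return [simulated_request() for _ in range(requests_per_user)]

    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=users) as pool:
        sessions = list(pool.map(lambda _: user_session(), range(users)))
    elapsed = time.perf_counter() - started

    latencies = [lat for session in sessions for lat in session]
    return {
        "users": users,
        "requests": len(latencies),
        "throughput_rps": len(latencies) / elapsed,
        "avg_latency_ms": 1000.0 * sum(latencies) / len(latencies),
    }


if __name__ == "__main__":
    # Step the load up: the anticipated load first, then well beyond it.
    for users in (1, 10, 50):
        print(run_load(users, requests_per_user=20))
```

With a real system, throughput that stops growing while latency keeps rising as users are added is the usual sign of a bottleneck or of approaching the breaking point. Dedicated load-testing tools add ramp-up schedules, think time and distributed load generation on top of this basic idea.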

Common Performance Problems

Most performance problems revolve around speed, response time, load time and poor scalability. Speed is often one of the most important attributes of an application; a slow-running application will lose potential users. Performance testing is done to make sure an app runs fast enough to keep a user’s attention and interest. Take a look at the following list of common performance problems and notice how speed is a common factor in many of them:

- Long load time - Load time is normally the initial time it takes an application to start. This should generally be kept to a minimum. While some applications cannot be made to load in under a minute, load time should be kept under a few seconds if possible.

- Poor response time - Response time is the time from when a user inputs data into the application until the application outputs a response to that input. Generally this should be very quick; again, if a user has to wait too long, they lose interest.

- Poor scalability - A software product suffers from poor scalability when it cannot handle the expected number of users or when it does not accommodate a wide enough range of users. Load testing should be done to be certain the application can handle the anticipated number of users.

Bottlenecking - Bottlenecks are obstructions in a system that degrade overall system performance. Bottlenecking occurs when either coding errors or hardware issues cause a decrease in throughput under certain loads. It is often caused by one faulty section of code, so the key to fixing a bottlenecking issue is to find the section of code that is causing the slowdown and fix it there. Bottlenecking is generally fixed either by improving poorly running processes or by adding hardware. Some common performance bottlenecks are:

- CPU utilization

- Memory utilization

- Network utilization

- Operating System limitations

- Disk usage
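Finding the section of code that causes a slowdown is usually done with a profiler. A minimal sketch using Python's standard `cProfile` and `pstats` modules; the `parse_records`, `slow_lookup` and `pipeline` functions are hypothetical, and `slow_lookup` is deliberately slow because each membership test scans a list:

```python
import cProfile
import io
import pstats


def parse_records(n: int) -> list:
    """Hypothetical fast step."""
    return [str(i) for i in range(n)]


def slow_lookup(records: list) -> int:
    """Hypothetical bottleneck: each `in` test scans the list, so the loop is O(n^2)."""
    hits = 0
    for r in records[:5000]:
        if r in records:
            hits += 1
    return hits


def pipeline() -> int:
    records = parse_records(20_000)
    return slow_lookup(records)


def profile_top(func, limit: int = 5) -> str:
    """Profile one call of `func` and return the top entries sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    stream = io.StringIO()
    pstats.Stats(profiler, stream=stream).sort_stats("cumulative").print_stats(limit)
    return stream.getvalue()


if __name__ == "__main__":
    print(profile_top(pipeline))
```

In the report, `slow_lookup` accounts for most of the time. Converting `records` to a set before the loop would make each membership test an average O(1) lookup, which is the kind of targeted fix described above. Hardware bottlenecks such as CPU, memory, network or disk saturation are found with system monitors rather than a code profiler.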

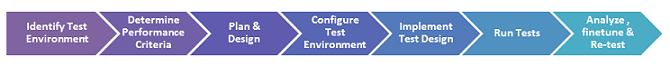

Performance Testing Process

The methodology adopted for performance testing can vary widely, but the objective of performance tests remains the same. It can help demonstrate that your software system meets certain predefined performance criteria, compare the performance of two software systems, or identify parts of your software system that degrade its performance.

Below is a generic performance testing process:

- Identify your testing environment - Know your physical test environment, your production environment and what testing tools are available. Understand the details of the hardware, software and network configurations used during testing before you begin the testing process. This knowledge helps testers create more efficient tests and identify possible challenges they may encounter during the performance testing procedures.

- Identify the performance acceptance criteria - This includes goals and constraints for throughput, response times and resource allocation. It is also necessary to identify project success criteria outside of these goals and constraints. Testers should be empowered to set performance criteria and goals, because project specifications often do not include a wide enough variety of performance benchmarks, and sometimes include none at all. When possible, finding a similar application to compare against is a good way to set performance goals.

- Plan & design performance tests - Determine how usage is likely to vary amongst end users and identify key scenarios to test for all possible use cases. It is necessary to simulate a variety of end users, plan performance test data and outline what metrics will be gathered.

- Configure the test environment - Prepare the testing environment before execution, and arrange tools and other resources.

- Implement test design - Create the performance tests according to your test design.

- Run the tests - Execute and monitor the tests.

- Analyze, tune and retest - Consolidate, analyze and share test results. Then fine-tune and test again to see whether performance has improved or degraded. Because improvements generally grow smaller with each retest, stop when the bottleneck is caused by the CPU; at that point, consider increasing CPU power.
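The "identify acceptance criteria" and "analyze, tune and retest" steps can be made concrete by checking each run against explicit thresholds and comparing runs. A minimal Python sketch; the metric names, limits and measured values below are hypothetical and only illustrate the shape of such a check:

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    metric: str
    limit: float
    higher_is_better: bool = False  # e.g. throughput, as opposed to response time


def evaluate(results: dict, criteria: list) -> list:
    """Return (metric, measured, limit, passed) for each acceptance criterion."""
    report = []
    for c in criteria:
        measured = results[c.metric]
        passed = measured >= c.limit if c.higher_is_better else measured <= c.limit
        report.append((c.metric, measured, c.limit, passed))
    return report


def relative_change(before: float, after: float) -> float:
    """Percentage change between two test runs (negative means the value dropped)."""
    return 100.0 * (after - before) / before


if __name__ == "__main__":
    # Hypothetical targets and measurements, for illustration only.
    criteria = [
        Criterion("p95_response_ms", 500.0),
        Criterion("throughput_rps", 200.0, higher_is_better=True),
        Criterion("cpu_utilization_pct", 80.0),
    ]
    baseline = {"p95_response_ms": 620.0, "throughput_rps": 180.0, "cpu_utilization_pct": 95.0}
    retest = {"p95_response_ms": 480.0, "throughput_rps": 210.0, "cpu_utilization_pct": 78.0}
    for row in evaluate(retest, criteria):
        print(row)
    print(relative_change(baseline["p95_response_ms"], retest["p95_response_ms"]))
```

Keeping the criteria as data rather than scattered `if` statements makes it easy to rerun the same check after every tuning step.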

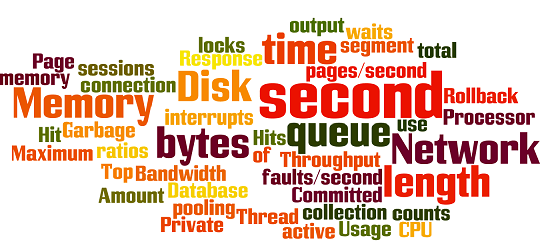

Performance Parameters Monitored

The basic parameters monitored during performance testing include:

- Processor Usage - amount of time processor spends executing non-idle threads.

- Memory use - amount of physical memory available to processes on a computer.

- Disk time - amount of time disk is busy executing a read or write request.

- Bandwidth - shows the bits per second used by a network interface.

- Private bytes - number of bytes a process has allocated that can’t be shared amongst other processes. These are used to measure memory leaks and usage.

- Committed memory - amount of virtual memory used.

- Memory pages/second - number of pages written to or read from the disk in order to resolve hard page faults. A hard page fault occurs when code or data that is not in the current working set has to be retrieved from disk.

- Page faults/second - the overall rate at which page faults are processed by the processor. Again, a page fault occurs when a process requires code or data from outside its working set.

- CPU interrupts per second - the average number of hardware interrupts a processor receives and processes each second.

- Disk queue length - the average number of read and write requests queued for the selected disk during a sample interval.

- Network output queue length - length of the output packet queue in packets. Anything more than two indicates a delay, and the bottleneck needs to be addressed.

- Network bytes total per second - rate at which bytes are sent and received on the interface, including framing characters.

- Response time - time from when a user enters a request until the first character of the response is received.

- Throughput - the rate at which a computer or network receives requests, usually measured per second.

- Amount of connection pooling - the number of user requests that are met by pooled connections. The more requests met by connections in the pool, the better the performance will be.

- Maximum active sessions - the maximum number of sessions that can be active at once.

- Hit ratios - the proportion of SQL statements that are handled by cached data instead of expensive I/O operations. This is a good place to start for solving bottlenecking issues.

- Hits per second - the number of hits on a web server during each second of a load test.

- Rollback segment - the amount of data that can roll back at any point in time.

- Database locks - locking of tables and databases needs to be monitored and carefully tuned.

- Top waits - monitored to determine which wait times can be cut down when dealing with how fast data is retrieved from memory.

- Thread counts - an application’s health can be measured by the number of threads that are running and currently active.

- Garbage collection - has to do with returning unused memory back to the system. Garbage collection needs to be monitored for efficiency.
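Several of these parameters can be sampled from inside a process. A minimal Python sketch, assuming a Unix system (the standard `resource` module is not available on Windows), collects CPU time, peak memory, page faults, thread count, garbage collection counts and a cache hit ratio. The `cached_query` function is hypothetical and uses `functools.lru_cache` to stand in for a query cache; a database's own hit ratio would come from the database's statistics instead.

```python
import functools
import gc
import resource
import threading


@functools.lru_cache(maxsize=128)
def cached_query(key: int) -> int:
    """Hypothetical lookup; repeated keys are served from the cache."""
    return key * key


def snapshot() -> dict:
    """Sample a few monitored parameters for the current Python process (Unix only)."""
    usage = resource.getrusage(resource.RUSAGE_SELF)
    info = cached_query.cache_info()
    lookups = info.hits + info.misses
    return {
        "cpu_seconds": usage.ru_utime + usage.ru_stime,  # user + system CPU time
        "max_rss": usage.ru_maxrss,  # peak resident memory: KB on Linux, bytes on macOS
        "major_page_faults": usage.ru_majflt,  # faults that needed disk I/O
        "minor_page_faults": usage.ru_minflt,
        "thread_count": threading.active_count(),
        "gc_collections": [gen["collections"] for gen in gc.get_stats()],
        "cache_hit_ratio": info.hits / lookups if lookups else 0.0,
    }


if __name__ == "__main__":
    for k in [1, 2, 3, 1, 2, 1]:
        cached_query(k)
    print(snapshot())
```

In practice these values are sampled periodically during the test run and plotted against the applied load, so that a rising page fault rate or a falling hit ratio can be matched to the load level where it started.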

CPU performance indicators:

- CPU clock speed

- CPU Cache size

- Front-side bus (FSB) speed (Intel)

Memory performance indicators:

- Throughput

Disk performance indicators:

- Input/Output Operations Per Second (IOPS)

- Bandwidth

- Throughput

- Latency - the average time for the processing of a single request
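To show how these disk indicators relate, here is a minimal Python sketch of a sequential write test, loosely in the spirit of a `dd` run. It writes to a temporary file in the default temp directory (an assumption: if that directory is on a RAM-backed filesystem, the result will not reflect a real disk) and calls `fsync` so the page cache does not hide the device time. Tools such as fio or dd remain the better choice for real measurements.

```python
import os
import tempfile
import time


def _write_all(fd: int, data: bytes) -> None:
    """os.write may write fewer bytes than requested, so loop until the block is written."""
    view = memoryview(data)
    while view:
        written = os.write(fd, view)
        view = view[written:]


def sequential_write_test(total_mb: int = 64, block_kb: int = 1024) -> dict:
    """Write `total_mb` MB sequentially in `block_kb` KB blocks and derive disk indicators."""
    block = os.urandom(block_kb * 1024)
    blocks = (total_mb * 1024) // block_kb
    fd, path = tempfile.mkstemp()
    try:
        start = time.perf_counter()
        for _ in range(blocks):
            _write_all(fd, block)
        os.fsync(fd)  # flush to the device so the page cache does not hide disk time
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
        os.remove(path)
    iops = blocks / elapsed
    return {
        "throughput_mb_s": total_mb / elapsed,
        "iops": iops,
        # Requests are issued one at a time, so the average time per request is 1 / IOPS.
        "avg_latency_ms": 1000.0 / iops,
    }


if __name__ == "__main__":
    print(sequential_write_test())
```

Because only one request is in flight at a time, latency and IOPS are simply reciprocals here; with a deeper queue (several outstanding requests, as fio can generate) the device can deliver more IOPS than 1 / latency.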

Network performance indicators:

- Availability

- Latency

- Utilization rate

- Throughput

- Bandwidth

Xen performance indicators

Currently, we do performance testing for the Xen guest’s CPU, memory, disk and network. We mainly focus on the guest side, not the hypervisor side.

We choose the following combination of tools to test Xen guest performance:

- CPU - nbench

- Memory - LMbench

- Disk - fio

- Network - netperf

Performance Test Tools

There is a wide variety of performance testing tools available on the market. The tool you choose for testing will depend on many factors, such as the types of protocol supported, license cost, hardware requirements and platform support. Below is a list of popularly used testing tools.

CPU

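- bc - `time echo "scale=5000;4*a(1)" | bc -l -q` computes pi (as 4 * arctan(1)) to 5000 decimal places; the elapsed time serves as a quick CPU benchmark.

- nbench - is a synthetic computing benchmark program developed in the mid-1990s by the now-defunct BYTE magazine, intended to measure a computer’s CPU, FPU and memory system speed.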
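A rough Python analogue of the bc one-liner is to time an integer-only computation of pi. This sketch uses Machin's formula, pi/4 = 4 * arctan(1/5) - arctan(1/239), rather than bc's arctan(1), because it converges much faster; it is a quick single-threaded CPU comparison, not a substitute for nbench.

```python
import time


def arccot(x: int, unity: int) -> int:
    """Fixed-point arctan(1/x) scaled by `unity`, using the alternating Taylor series."""
    total = term = unity // x
    x_squared = x * x
    n = 3
    sign = -1
    while term:
        term //= x_squared  # next odd power: 1 / x**n
        total += sign * (term // n)
        sign = -sign
        n += 2
    return total


def pi_digits(digits: int) -> int:
    """Return pi * 10**digits truncated to an integer, i.e. '3' followed by `digits` decimals."""
    guard = 10  # extra digits absorb the truncation error of integer division
    unity = 10 ** (digits + guard)
    pi = 4 * (4 * arccot(5, unity) - arccot(239, unity))
    return pi // 10 ** guard


if __name__ == "__main__":
    start = time.perf_counter()
    pi_digits(5000)
    print(f"5000 digits of pi in {time.perf_counter() - start:.3f} s")
```

For example, `pi_digits(10)` returns `31415926535`. As with the bc test, only compare timings taken with the same interpreter version, since the result depends on the software as much as on the CPU.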

Memory

- LMbench - is a suite of simple, portable, ANSI/C microbenchmarks for UNIX/POSIX. In general, it measures two key features: latency and bandwidth.
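To illustrate what a memory bandwidth figure means, here is a minimal Python sketch that times full copies of a large buffer and reports the best rate. It is far cruder than LMbench (interpreter overhead, allocation cost and cache effects are all included), so treat the number only as a rough comparison between machines.

```python
import time


def copy_bandwidth_gb_s(size_mb: int = 128, repeats: int = 5) -> float:
    """Return the best observed memory copy rate in GB/s for a `size_mb` MB buffer."""
    src = bytearray(size_mb * 1024 * 1024)
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        dst = bytes(src)  # one full copy of the buffer
        best = min(best, time.perf_counter() - start)
        del dst
    # A copy reads and writes every byte, so count the traffic twice.
    return 2 * len(src) / best / 1e9


if __name__ == "__main__":
    print(f"{copy_bandwidth_gb_s():.1f} GB/s")
```

Taking the best of several runs reduces noise from other processes; the buffer should also be much larger than the CPU caches so that main memory, not cache, is being measured.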

Disk

- dd - the simplest tool used to test disk performance.

- fio - is a tool that spawns a number of threads or processes doing a particular type of I/O action as specified by the user.

- iozone - is a filesystem benchmark tool. The benchmark generates and measures a variety of file operations.

- bonnie++ - is a benchmark suite that is aimed at performing a number of simple tests of hard drive and file system performance or the lack thereof.

- dbench - is a tool to generate I/O workloads to either a filesystem or to a networked CIFS or NFS server. It can be used to stress a filesystem or a server to see at which workload it becomes saturated, and can also be used for prediction analysis to determine “How many concurrent clients/applications performing this workload can my server handle before response starts to lag?”

- iometer -

- hdparm -

Network

- netperf - is a benchmark that can be used to measure various aspects of networking performance. Currently, its focus is on bulk data transfer and request/response performance using either TCP or UDP and the Berkeley Sockets interface. In addition, tests for DLPI, Unix Domain Sockets and IPv6 may be conditionally compiled in.

- iperf -
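Request/response performance, one of netperf's two focus areas, can be illustrated with a small Python sketch similar in spirit to netperf's TCP_RR test: one-byte requests are echoed back over a single TCP connection and each round trip is timed. This sketch runs both ends over loopback, so it measures the host's network stack rather than a NIC or a real network; pointing the client at a remote echo server would be needed for that.

```python
import socket
import statistics
import threading
import time


def _echo_server(server: socket.socket) -> None:
    """Accept one connection and echo every byte back until the client disconnects."""
    conn, _ = server.accept()
    with conn:
        conn.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
        while True:
            data = conn.recv(1)
            if not data:
                break
            conn.sendall(data)


def tcp_request_response(transactions: int = 1000, host: str = "127.0.0.1") -> dict:
    """Time one-byte request/response round trips over a single TCP connection."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind((host, 0))  # port 0: let the OS pick a free port
        server.listen(1)
        worker = threading.Thread(target=_echo_server, args=(server,), daemon=True)
        worker.start()
        latencies = []
        with socket.create_connection(server.getsockname()) as client:
            client.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
            for _ in range(transactions):
                start = time.perf_counter()
                client.sendall(b"x")
                client.recv(1)
                latencies.append(time.perf_counter() - start)
        worker.join()  # server thread exits once the client closes
    return {
        "transactions_per_s": transactions / sum(latencies),
        "mean_latency_us": statistics.mean(latencies) * 1e6,
    }


if __name__ == "__main__":
    print(tcp_request_response())
```

`TCP_NODELAY` disables Nagle's algorithm so that small requests are sent immediately, which matters for request/response latency but not for bulk transfer throughput.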

MySQL

HTTP

Java

System

Summary

Performance testing is necessary before marketing any software product. It helps ensure customer satisfaction and protects investors’ investment against product failure. The costs of performance testing are usually more than made up for by improved customer satisfaction, loyalty and retention.